Aneesh Sathe

Jan 10, 2025 - AI Agents, Machiavelli's Study

January 10, 2025

Agents Are Not Enough

Last year I experimented heavily with Knowledge Graphs, because it has been clear that LLMs by themselves fall short for lack of knowledge. This December 2024 paper by Chirag Shah and Ryen White (linked from the heading above) expands on those shortcomings by exploring not just knowledge but also value generation, personalization, and trust.

They open the paper by casting a very wide net for what counts as an “agent”: everything from thermostats to LLM tools. While this seems facetious at first, their next point is interesting. Agents by definition “remove agency from a user in order to do things on the user’s behalf and save them time and effort.” I think this is an interesting way to inject an LLM-flavored principal-agent problem into the Agentic AI conversation.

Their broad suggestion is to expand the ecosystem of agents by including “Sims”. Sims are simulations of the user which address

- privacy and security

- automated interactions and

- representing the interests of the user by holding intimate knowledge about the user

It’s a short, easy read if you have 10 minutes.

Machiavelli and the Emergence of the Private Study

Infinite knowledge is available through the internet today. It is trivially available, and some, ahem, blogs make a performance of consuming it. Machiavelli used to

put on the garments of court and palace. Fitted out appropriately, I step inside the venerable courts of the ancients, where, solicitously received by them, I nourish myself on that food that alone is mine and for which I was born, where I am unashamed to converse with them and to question them about the motives for their actions, and they, in their humanity, answer me. And for four hours at a time I feel no boredom, I forget all my troubles, I do not dread poverty, and I am not terrified by death. I absorb myself into them completely.

Some folks have a private office, but an office is not a study. A study, or studiolo

in Italian, a precursor to the modern-day study — came to offer readers access to a different kind of chamber, a personal hideaway in which to converse with the dead. Cocooned within four walls, the studiolo was an aperture through which one could cultivate the self. After all, to know the world, one must begin with knowing the self, as ancient philosophy instructs. In order to know the self, one ought to study other selves too, preferably their ideas as recorded in texts. And since interior spaces shape the inward soul, the studiolo became a sanctuary and a microcosm. The study thus mediates the world, the word, and the self.

In the 1500s Michel de Montaigne writes:

We should have wife, children, goods, and above all health, if we can; but we must not bind ourselves to them so strongly that our happiness [tout de heur] depends on them. We must reserve a back room [une arriereboutique] all our own, entirely free, in which to establish our real liberty and our principal retreat and solitude.

Centuries later, Virginia Woolf points to what seems to be an eternal inequality: the struggle to find “a room of one’s own”.

The enclosure of the study, for those of us lucky to have one, offers us a paradoxical sort of freedom. Conceptually, the studiolo is a pharmakon, a cure or poison for the soul. In its highest aspirations, the studiolo, as developed by humanists from Petrarch to Machiavelli to Montaigne, is a sanctuary for self-cultivation. Bookishness was elevated into a saintly virtue.

The world today would perhaps be better off if more of us had our own studiolos.

Jan 9, 2025 - The Age of Fire and Gravel

January 9, 2025

Today I discovered the Public Domain Image Archive, attached to the Public Domain Review, which has some great essays and commentary on image collections, like the one below.

Utagawa Hiroshige: Last Great Master of Ukiyo-e

Just before Hiroshige died, possibly of cholera, he wrote the following poem:

I leave my brush in the East

And set forth on my journey.

I shall see the famous places in the Western Land.

This was a foretelling of sorts, because Hiroshige

was a hugely influential figure, not only in his homeland but also on Western painting. Towards the end of the nineteenth century, as a part of the trend in “Japonism”, European artists such as Monet, Whistler, and Cézanne, looked to Hiroshige’s work for inspiration, and a certain Vincent van Gogh was known to paint copies of his prints.

Los Angeles Burns

Driven by global climate change, the Santa Ana “Devil” winds were intense this year, parching the land.

Part of the reason for the spread was the rerouting of funds away from the fire department, which reduced its ability to respond appropriately.

Besides the immense personal damage, NASA’s JPL was shut down.

Though the memories are not all happy, LA was once home. It’s where I discovered my love of biology and computers, not to mention the tremendous library system and the Tar Pits.

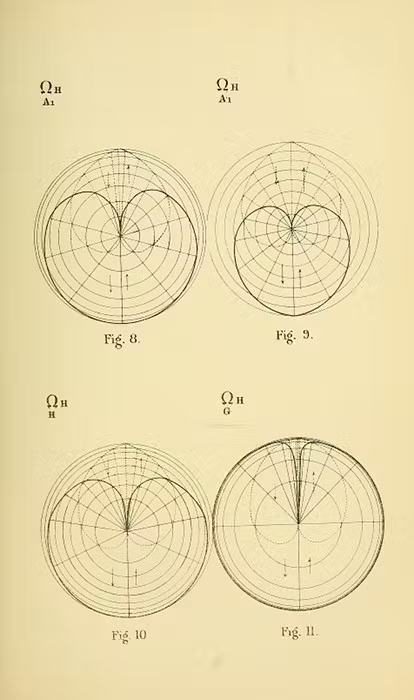

Rethinking Dose-Response Analysis with Bayesian Inference

Analyzing dose-response experiments is tricky, and the standard Marquardt-Levenberg algorithm “does not evaluate the certainty (or uncertainty) of the estimates nor does it allow for the statistical comparison of two datasets.” This leaves a lot of room for subjectivity and wishful thinking to creep in, which can lead to biased conclusions.

A Bayesian Approach?

The authors propose a Bayesian inference methodology that addresses these limitations. This approach can “characterize the noise of dataset while inferring probable values distributions for the efficacy metrics.”

- It also allows for the statistical comparison of two datasets and can “compute the probability that one value is greater than the other”.

- Critically, it incorporates prior knowledge (and intuition) through prior distributions: “The model incorporates the notion of intuition through prior distributions and computes the most probable value distribution for each of the efficacy metrics”.

Beyond Single Point Estimates

This method moves beyond single-point estimates, which can be misleading, and “explicitly quantifies the reliability of the efficacy metrics taking into account the noise over the data”. The goal is to help researchers “analyze and interpret dose–response experiments by including uncertainty in their reasoning and providing them a simple and visual approach to do so”.

So What?

By “considering distributions of probable values instead of single point estimates,” this Bayesian approach provides a more robust interpretation of your data. This is particularly important when dealing with noisy or unresponsive datasets, which can often occur in drug discovery.

Though their demo is offline, the code is available.
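To make "distributions instead of single point estimates" concrete, here is a minimal sketch. It is not the authors' model or code: it assumes a Hill curve with fixed bottom (0), top (100), and slope (1), a known Gaussian noise level, and a Gaussian prior on log10 IC50 standing in for "intuition". The posterior is computed on a grid, and two datasets are compared via the probability that one IC50 is larger than the other. All function names and the synthetic data are hypothetical.

```python
import math

# Hypothetical sketch, not the paper's method: grid posterior over log10 IC50.
# Assumes bottom=0, top=100, slope=1 are fixed and the noise sigma is known.

def hill(log_dose, log_ic50, bottom=0.0, top=100.0, slope=1.0):
    """Inhibition-style Hill curve: response falls from top to bottom as dose rises."""
    return bottom + (top - bottom) / (1.0 + 10.0 ** (slope * (log_dose - log_ic50)))

def grid_posterior(log_doses, responses, grid, sigma=5.0, prior_mean=-6.0, prior_sd=1.0):
    """Normalized posterior weights over candidate log10 IC50 values."""
    log_post = []
    for c in grid:
        lp = -0.5 * ((c - prior_mean) / prior_sd) ** 2  # log prior ("intuition"), up to a constant
        for x, y in zip(log_doses, responses):
            lp -= 0.5 * ((y - hill(x, c)) / sigma) ** 2  # Gaussian log likelihood, up to a constant
        log_post.append(lp)
    peak = max(log_post)  # subtract the max before exponentiating to avoid underflow
    weights = [math.exp(v - peak) for v in log_post]
    total = sum(weights)
    return [w / total for w in weights]

def posterior_summary(grid, post):
    """Posterior mean and standard deviation, i.e. an estimate plus its uncertainty."""
    mean = sum(g * p for g, p in zip(grid, post))
    sd = math.sqrt(sum((g - mean) ** 2 * p for g, p in zip(grid, post)))
    return mean, sd

def prob_greater(grid, post_x, post_y):
    """P(log10 IC50 of X > log10 IC50 of Y), treating the two posteriors as independent."""
    return sum(px * py
               for i, px in enumerate(post_x)
               for j, py in enumerate(post_y)
               if grid[i] > grid[j])

# Synthetic example: true log10 IC50 of -6 (A) and -5 (B), with fixed made-up noise
# offsets so that the run is deterministic.
log_doses = [-9, -8, -7, -6, -5, -4, -3]
noise = [2.0, -3.0, 1.0, -2.0, 3.0, -1.0, 0.0]
resp_a = [hill(x, -6.0) + e for x, e in zip(log_doses, noise)]
resp_b = [hill(x, -5.0) + e for x, e in zip(log_doses, noise)]

grid = [-10.0 + 0.01 * i for i in range(801)]  # candidate log10 IC50 from -10 to -2
post_a = grid_posterior(log_doses, resp_a, grid)
post_b = grid_posterior(log_doses, resp_b, grid)

print(posterior_summary(grid, post_a))
print(prob_greater(grid, post_b, post_a))  # probability that B is less potent than A
```

A grid is the bluntest way to get a posterior, but with a single free parameter it avoids any sampler and makes the prior's role easy to see; the paper's model also infers the noise and the other curve parameters, which this sketch holds fixed.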

This was on repeat today

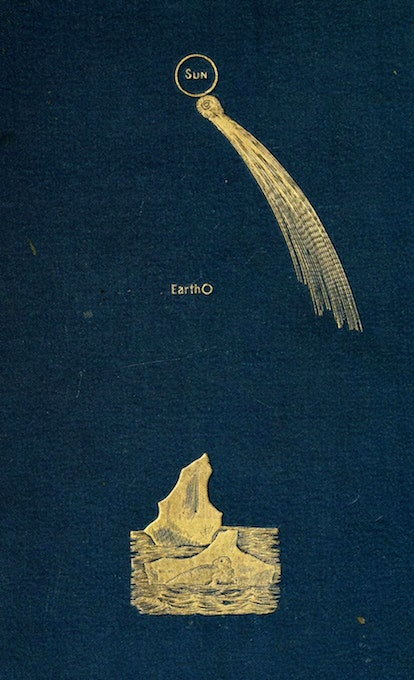

Image source: Book Cover: Ignatius Donnelly. Ragnarok: The Age of Fire and Gravel. New York, D. Appleton and Company, 1883

Jan. 8, 2025: Count your DIGITS! Drunk Bayesian

January 8, 2025

NVIDIA Project DIGITS

Around 2015 I was putting together funds in academia, convincing IT, senior professors, and finance that yes, it was worth giving me a LOT of cash to build a workstation with multiple GPUs.

…

“No, it isn’t for gaming.”

…

“Yes, it will change the world.”

…

“No, there are no university rules saying that hardware bought on multiple invoices across multiple departments can’t be used in the same box.”

…

“Yes, I’m aware that all my individual quotes are just below the bureaucracy-summoning purchase limits.”

…

“Yes, I tried random forests along with the other stats and ML methods; this really is better. How do I know? Well…”

Deep Learning was taking off, but in the biotech world it was seen as a regular tech update.

I did get the money and built that loud, jet-engine-sounding monster. Among the first pieces of software I installed was DIGITS (now archived); this was just as Keras was released, and all I wanted to do was build a neural network. That little step changed my life.

Today NVIDIA announced hardware, also named DIGITS. If it delivers the claimed performance (or even comes near it), it will be as life-changing for early explorers as the DIGITS software was for me.

The $3000 price tag is probably much better justified than the one attached to another little piece of hardware from last year. I hope to get my hands on a couple of these, not just for LLMs but for my first love, images.

From the press release:

GB10 Superchip Provides a Petaflop of Power-Efficient AI Performance

The GB10 Superchip is a system-on-a-chip (SoC) based on the NVIDIA Grace Blackwell architecture and delivers up to 1 petaflop of AI performance at FP4 precision.

GB10 features an NVIDIA Blackwell GPU with latest-generation CUDA® cores and fifth-generation Tensor Cores, connected via NVLink®-C2C chip-to-chip interconnect to a high-performance NVIDIA Grace™ CPU, which includes 20 power-efficient cores built with the Arm architecture. MediaTek, a market leader in Arm-based SoC designs, collaborated on the design of GB10, contributing to its best-in-class power efficiency, performance and connectivity.

The GB10 Superchip enables Project DIGITS to deliver powerful performance using only a standard electrical outlet. Each Project DIGITS features 128GB of unified, coherent memory and up to 4TB of NVMe storage. With the supercomputer, developers can run up to 200-billion-parameter large language models to supercharge AI innovation. In addition, using NVIDIA ConnectX® networking, two Project DIGITS AI supercomputers can be linked to run up to 405-billion-parameter models.

Technically it’s not a proper petaflop at FP4 precision, but I’m ok with that kind of impropriety.
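The 200-billion and 405-billion-parameter figures from the press release can be sanity-checked with back-of-envelope arithmetic. A minimal sketch, assuming weights are stored at 4 bits (FP4, i.e. 0.5 bytes per parameter), decimal gigabytes, and ignoring KV cache, activations, and runtime overhead, so the real headroom is smaller than shown:

```python
# Back-of-envelope check of weight memory only. Assumptions: 4-bit weights by default,
# 1 GB = 1e9 bytes, and no allowance for KV cache, activations, or OS/runtime overhead.

def weight_gb(params, bits_per_param=4):
    """Gigabytes needed to hold the model weights alone."""
    return params * bits_per_param / 8 / 1e9

single = weight_gb(200e9)  # one Project DIGITS: 128 GB unified memory
linked = weight_gb(405e9)  # two linked units: 2 x 128 GB = 256 GB

print(single, single <= 128)
print(linked, linked <= 2 * 128)
print(weight_gb(200e9, bits_per_param=16))  # the same model at 16-bit weights
```

At 16 bits the 200B model would need 400 GB for weights alone, which suggests the headline model sizes lean on FP4 the same way the petaflop does.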

Drunk Bayesians and the standard errors in their interactors

This video is from 2018, and one of the interesting things Gelman touches on is the ability of AI to do model fitting automatically. Gelman argues that an AI designed to perform statistical analysis would also need to deal with the implications of Cantor’s diagonal argument.

Essentially, new models need to be built when new data don’t fit the old model. You go down the diagonal of more data vs increasing model complexity.

This means that an AI cannot have a full model ahead of time; it must have different modules and an executive function, and it must make mistakes. He suggests that AI needs to be more like a human, with analytical and visual modules and an executive function, rather than a single, monolithic program.

Perhaps we aren’t quite there yet but the emerging agentic methods are looking promising in light of his thoughts.

Some cool quotes below that I hope encourage you to watch this longish but lively window into the mind of one of the best-known Bayesian statisticians.

-> I think that there’s something about statistics in the philosophy of statistics which is inherently untidy

-> …in Bayes you do inference based on the model and that’s codified and logical but then there’s this other thing we do which is we say our inferences don’t make sense we reject our model and we need to change it

-> Our statistical models have to be connected to our understanding of the world

On the reproducibility crisis in science in three parts:

Studies not replicating: This is the most obvious part of the crisis, where a study’s findings are not supported when the study is repeated with new data. This casts doubt on the original claims and the related literature, and can invalidate a whole sub-field.

Problems with statistics: Gelman argues that some studies do not do what they claim to do, often due to errors in statistical analysis or research methods. He gives the example of a study about male upper body strength that actually measured the fat on men’s arms.

Three fundamental problems of statistics:

- generalizing from a sample to a population

- generalizing from a treatment to a control group, and

- generalizing from measurements to underlying constructs of interest

This last point is particularly interesting in the biotech space. Which brings us to,

Problems with substantive theory: Many studies lack a strong connection between statistical models and the real world. A better understanding of mechanisms and interactions is necessary for more accurate inferences. Gelman also discusses the “freshman fallacy,” where researchers assume a random sample of the population is not needed for causal inference when in fact it is crucial if the treatment effect varies among people (especially important if you are trying to discover drugs!). He further notes that the lack of theory and mechanisms leaves us unable to estimate interactions, which are crucial.

He covers many more topics, from p-values and economics to Bayesians not being Bayesian enough.

As thanks for providing the source of the snowclone title, here’s some Static

Image: Vintage European style abacus engraving from New York’s Chinatown. A historical presentation of its people and places by Louis J. Beck (1898). Original from the British Library. Digitally enhanced by rawpixel.

Jan. 7, 2025: Building Dwelling Thinking

January 7, 2025

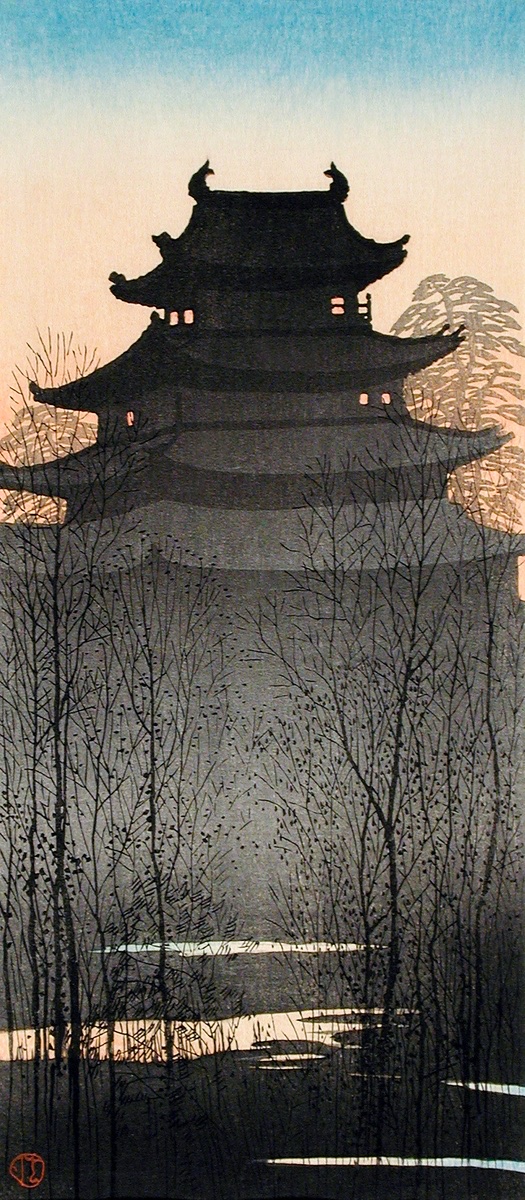

Today’s product builders and data scientists shape the way people see the world. The analyses, plots, and UIs we create are places where others dwell: not merely occupying them, but living in and harnessing the mental space we give them access to. Martin Heidegger’s essay Building Dwelling Thinking (archive.org), first given as a lecture in 1951, is collected in the 1971 book Poetry, Language, Thought.

Man’s relation to locations, and through locations to spaces, inheres in his dwelling. The relationship between man and space is none other than dwelling, strictly thought and spoken

What Heidegger calls locations, I have thought of as places. Places are anything with order and purpose, as perceived by the user. Spaces are less the mathematical or physical concepts and more like domains, such as the AI space or the biotech space. These spaces come into being as thought extends from the boundaries of places into the explorations enabled by the affordances of those spaces.

A boundary is not that at which something stops but, as the Greeks recognized, the boundary is that from which something begins its presencing.

[…]

The location admits the fourfold and it installs the fourfold.

Heidegger addresses thinking only very briefly and from a distance. In the life of today’s knowledge worker, thinking is everything. For knowledge workers to be able to “dwell”, they must be able to bring together the acts of thinking and building. This is why good visualization, analysis that reveals rather than hides, and products that expand rather than limit the user’s abilities are important.

Building and thinking are, each in its own way, inescapable for dwelling. The two, however, are also insufficient for dwelling so long as each busies itself with its own affairs in separation instead of listening to one another. They are able to listen if both building and thinking belong to dwelling, if they remain within their limits and realize that the one as much as the other comes from the workshop of long experience and incessant practice.

To be able to free the user is critical. Everyone has their expertise, and it is usually not in using your product. Making your place so convoluted that users have to conform and constrict themselves to use it is not kind placemaking. At the beginning of the essay there is a definition of what it means “to free”:

To free really means to spare. The sparing itself consists not only in the fact that we do not harm the one whom we spare. Real sparing is something positive and takes place when we leave something beforehand in its own nature, when we return it specifically to its being, when we “free” it in the real sense of the word into a preserve of peace. To dwell, to be set at peace, means to remain at peace within the free sphere that safeguards each thing in its nature. The fundamental character of dwelling is this sparing and preserving

My takeaway is that whenever a product or place is built, its primary concern should be the freedom of the person expected to dwell there. The freedom you provide enables them to explore the spaces they care about.

Image credit: Nagoya Castle (ca.1932) print in high resolution by Hiroaki Takahashi. Original from The Los Angeles County Museum of Art. Digitally enhanced by rawpixel.

Jan. 6, 2025

January 6, 2025

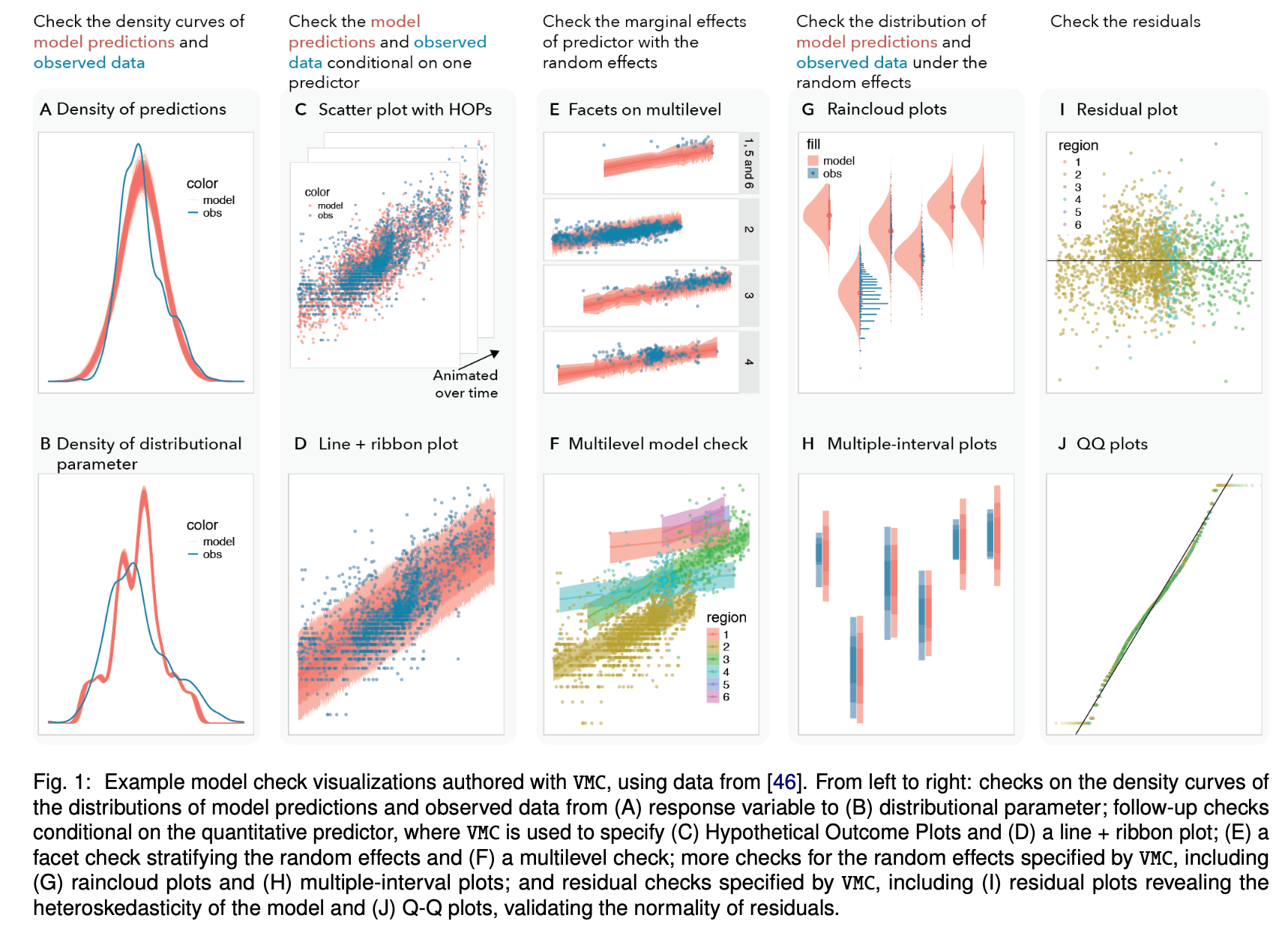

VMC: A Grammar for Visualizing Statistical Model Checks

Data scientists check how well a statistical model fits observed data with numerical and graphical checks. Graphical checks span a huge range beyond well-known ones like Q-Q plots. Scientists are of course limited by their training and experience, and it’s not trivial to arrive at effective model checks. Both programmatic and visual plotting tools require significant effort to generate new plots, which adds friction to doing proper checks.

Work out of Jessica Hullman’s lab has produced the VMC package (github), a tool for easily accessing these methods and quickly judging the quality of your model. VMC is

a high-level declarative grammar for generating model check visualizations. VMC categorizes design choices in model check visualizations via four components: sampling specification, data transformation, visual representation(s), and comparative layout. VMC improves the state-of-the-art in graphical model check specification in two ways:

(1) it allows users to explore a wide range of model checks through relatively small changes to a specification as opposed to more substantial code restructuring, and

(2) it simplifies the specification of model checks by defining a small number of semantically-meaningful design components tailored to model checking.

The work comes from a thoughtful place, aiming not just to help statisticians but to properly address the design considerations of a good tool, extending the wonderful family of tools, like ggplot2, built on the Grammar of Graphics.

Visualizations play a critical role in validating and improving statistical models. However, the design space of model check visualizations is not well understood, making it difficult for authors to explore and specify effective graphical model checks. VMC defines a model check visualization using four components:

- samples of distributions of checkable quantities generated from the model, including predictive distributions for new data and distributions of model parameters;

- transformations on observed data to facilitate comparison;

- visual representations of distributions;

- layouts to facilitate comparing model samples and observed data.
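To make the four components concrete, here is a rough Python sketch of one graphical model check decomposed that way. It is not VMC's interface (the package builds on the ggplot2 ecosystem, and none of its functions are mirrored here); the function names, the plug-in normal model (fitted mean and sd rather than full posterior draws), and the made-up data are all assumptions for illustration.

```python
import random
import statistics

# Hypothetical sketch of VMC's four components as plain Python functions.
# NOT VMC's API; names, the plug-in normal model, and the data are made up.

def sample(mu, sigma, n, draws, seed=0):
    """1. Sampling: replicated datasets from a fitted model (plug-in normal, not a full posterior)."""
    rng = random.Random(seed)
    return [[rng.gauss(mu, sigma) for _ in range(n)] for _ in range(draws)]

def transform(dataset):
    """2. Transformation: reduce a dataset to a checkable quantity, here its minimum."""
    return min(dataset)

def represent(values, bins=8):
    """3. Representation: a crude text histogram as (left bin edge, bar) pairs."""
    lo, hi = min(values), max(values)
    width = (hi - lo) / bins or 1.0  # guard against all values being identical
    counts = [0] * bins
    for v in values:
        counts[min(int((v - lo) / width), bins - 1)] += 1
    return [(lo + k * width, "#" * (c // 10)) for k, c in enumerate(counts)]

def layout(observed, replicated, hist):
    """4. Layout: juxtapose the observed quantity with the replicated distribution."""
    tail = sum(r >= observed for r in replicated) / len(replicated)
    rows = [f"{edge:7.2f} | {bar}" for edge, bar in hist]
    rows.append(f"observed = {observed:.2f}, share of replicates >= observed = {tail:.3f}")
    return "\n".join(rows), tail

obs = [0.1, 0.2, 0.2, 0.3, 0.4, 0.5, 0.7, 0.9, 1.4, 4.0]  # made-up, strictly positive, skewed
reps = sample(statistics.mean(obs), statistics.stdev(obs), len(obs), draws=1000)
rep_stats = [transform(r) for r in reps]
text, tail = layout(transform(obs), rep_stats, represent(rep_stats))
print(text)
```

A normal model fitted to strictly positive, skewed data keeps predicting negative minimums, so the observed minimum lands far in the tail. Swapping `transform` from `min` to `max`, or `represent` for another summary, changes the check without touching the other parts, which is roughly the flexibility VMC's point (1) describes.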

Pat Metheny: MoonDial

The central vibe here is one of resonant contemplation. This guitar allows me to go deep. Deep to a place that I maybe have never quite gotten to before. This is a dusk-to-sunrise record, hard-core mellow.

I have often found myself as a listener searching for music to fill those hours, and honestly, I find it challenging to find the kinds of things I like to hear. As much “mellow” music as there is out there, a lot of it just doesn’t do the thing for me.

This record might offer something to the insomniacs and all-night folks looking for the same sounds, harmonies, spirits, and melodies that I was in pursuit of during the late nights and early mornings that this music was recorded.

The above is from Pat’s website. I discovered Pat Metheny relatively recently and have grown to like his music. Last year he released MoonDial, which I picked up last week. It’s nice.

Check it out:

While I know nothing about musical instruments, the man is a proper geek:

Some years back, I had asked Linda Manzer, one of the best luthiers on the planet and one of my major collaborators, to build me yet another acoustic Baritone guitar, but this time one with nylon strings as opposed to the steel string version that I had used on the records One Quiet Night and What’s It All About.

My deep dive into the world of Baritone guitar began when I remembered that as a kid in Missouri, a neighbor had shown me a unique way of stringing where the middle two strings are tuned up an octave while the general tuning of the Baritone instrument remains down a 4th or a 5th. This opened up a dimension of harmony that had been previously unavailable to me on any conventional guitar.

There were never really issues with Linda’s guitar itself, but finding nylon strings that could manage that tuning without a) breaking or b) sounding like a banjo - was difficult.

Just before we hit the road, I ran across a company in Argentina (Magma) that specialized in making a new kind of nylon string with a tension that allowed precisely the sound I needed to make Linda’s Baritone guitar viable in my special tuning.

Lake bacteria evolve like clockwork with the seasons

This article covers a pair of studies on bacteria and viruses in a lake.

researchers found that over the course of a year, most individual species of bacteria in Lake Mendota rapidly evolve, apparently in response to dramatically changing seasons.

Gene variants would rise and fall over generations, yet hundreds of separate species would return, almost fully, to near copies of what they had been genetically prior to a thousand or so generations of evolutionary pressures.

From the preprint of the virus paper:

In the evolutionary arms race between viruses and their hosts, “kill-the-winner” and other forms of dynamics frequently occur, causing fluctuations in the abundance of various viral strains [55]. Despite these fluctuations, certain viral species persist over extended periods and demonstrate high occurrence over time, indicating their evolutionary success in adapting to changing environmental conditions. These high occurrence viral species may represent a ‘royal family’ viral species in the model used to explain the “kill-the-winner” dynamics, where certain sub-populations with enhanced viral fitness have descendants that become dominant in subsequent “kill-the-winner” cycles. It is probable that these high occurrence viral species maintain a stable presence at the coarse diversity level while undergoing continuous genomic and physiological changes at the microdiversity level. The dynamics at the level of viral and host interactions play a pivotal role in driving viral evolution and maintaining the dominance of ‘royal family’ viral species.

Image credit: Sitting cat, from behind (1812) drawing in high resolution by Jean Bernard. Original from the Rijksmuseum. Digitally enhanced by rawpixel.