Aneesh Sathe

Variations on a grocery run

December 4, 2024

I.

Unruly, unmoored

A tempest of questions

In the car seat swirls

II.

The car seat is full

Of a tidal wave

Of question marks

III.

A flood of questions

Pool in the car seat

Doubting the universe

IV.

Between the tides of questions

The car seat is both

Raft and the sea

V.

Asleep

The cauldron of the car seat

Brews

Questions

Thank you, Olivia Dean — Dive

The Arrival of Composable Knowledge

June 12, 2024

Traversing human history, even just the last two decades, we see a rapid increase in the accessibility of knowledge. The purpose of language, and of course all communication, is to transfer a concept from one system to another. For humans this ability to transfer concepts has been driven by advancements in technology, communication, and social structures and norms.

This evolution has made knowledge increasingly composable: individual pieces of information can be combined and recombined to create new understanding and innovation. Ten years ago I would have said that being able to read a research paper and having the knowledge to repeat that experiment in my lab was strong evidence of this composability (reproducibility issues notwithstanding).

Now, composability itself is getting an upgrade.

In the next essay I’ll be exploring the implications of the arrival of composable knowledge. This post is a light stroll to remind ourselves of how we got here.

Songs, Stories, and Scrolls

In ancient times, knowledge was primarily transmitted orally. Stories, traditions, and teachings were passed down through generations by word of mouth. This method, while rich in cultural context, was limited in scope and permanence. The invention of writing systems around 3400 BCE in Mesopotamia marked a significant leap. Written records allowed for the preservation and dissemination of knowledge across time and space, enabling more complex compositions of ideas (Renn, 2018).

Shelves, Sheaves, and Smart Friends

The establishment of libraries, such as the Library of Alexandria in the 3rd century BCE, and scholarly communities in ancient Greece and Rome, further advanced the composability of knowledge. These institutions gathered diverse texts and fostered intellectual exchanges, allowing scholars to build upon existing works and integrate multiple sources of information into cohesive theories and philosophies (Elliott & Jacobson, 2002).

Scribes, Senpai, and Scholarship

During the Middle Ages, knowledge preservation and composition were largely the domain of monastic scribes who meticulously copied and studied manuscripts. The development of universities in the 12th century, such as those in Bologna and Paris, created centers for higher learning where scholars could debate and synthesize knowledge from various disciplines. This was probably when humans shifted perspective and started to view themselves as apart from nature (Grumbach & van der Leeuw, 2021).

Systems, Scripts and the Scientific Method

The invention of the printing press by Johannes Gutenberg in the 15th century revolutionized knowledge dissemination. Printed books became widely available, drastically reducing the cost and time required to share information. This democratization of knowledge fueled the Renaissance, a period marked by the synthesis of classical and contemporary ideas, and the Enlightenment, which emphasized empirical research and the scientific method as means to build, refine, and share knowledge systematically (Ganguly, 2013).

Silicon, Servers, and Sharing

The 20th and 21st centuries have seen an exponential increase in the composability of knowledge due to digital technologies. The internet, open access journals, and digital libraries have made vast amounts of information accessible to anyone with an internet connection. Tools like online databases, search engines, and collaborative platforms enable individuals and organizations to gather, analyze, and integrate knowledge from a multitude of sources rapidly and efficiently. Weirdly, there have even been studies that attempt to predict future knowledge (Liu et al., 2019).

Conclusion

From oral traditions to digital repositories, the composability of knowledge has continually evolved, breaking down barriers to information and enabling more sophisticated and collaborative forms of understanding. Today, the ease with which we can access, combine, and build upon knowledge drives innovation and fosters a more informed and connected global society.

Dancing on the Shoulders of Giants

May 10, 2024

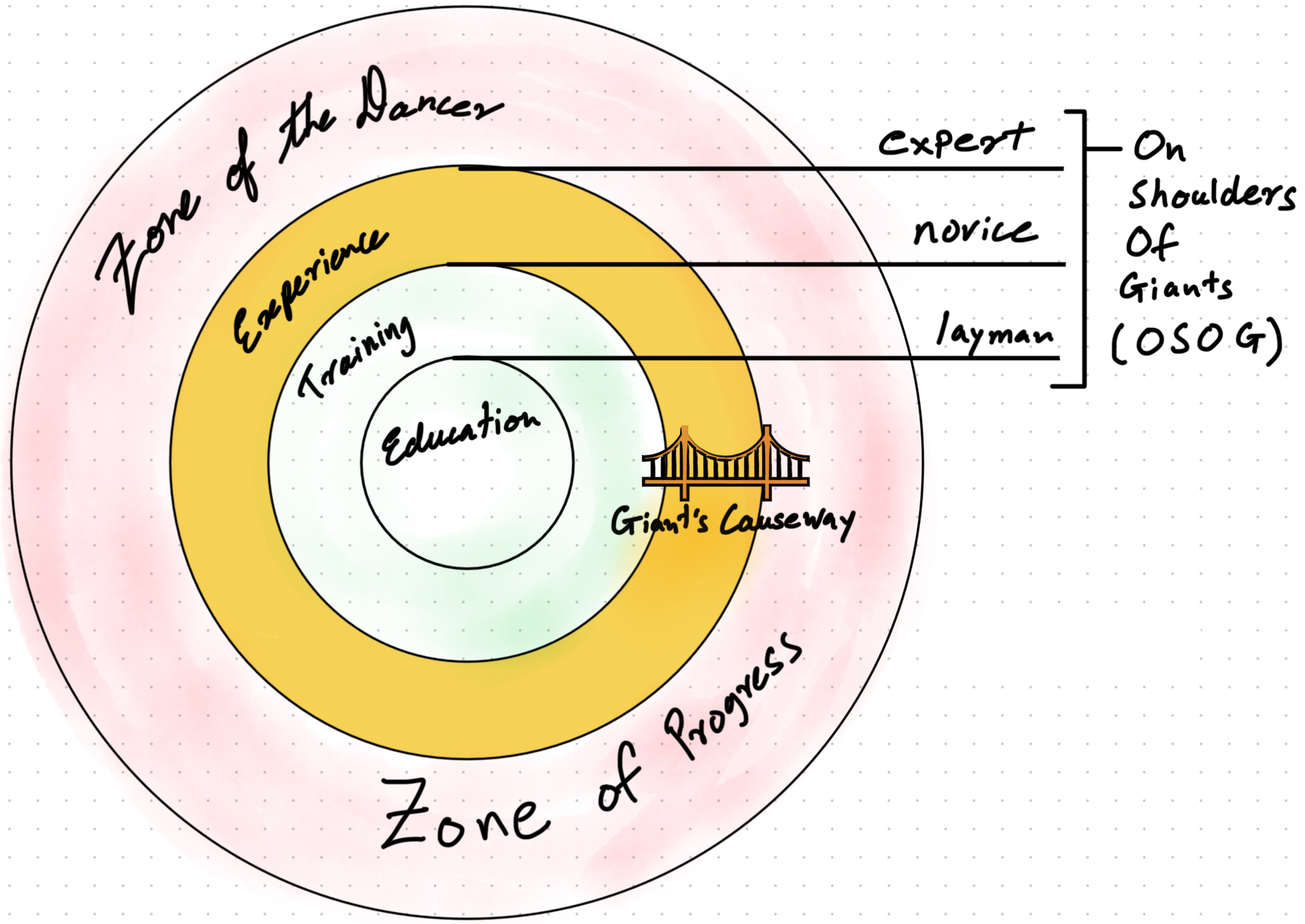

In Newton’s era it was rare to say things like “if I have seen further, it is by standing on the shoulders of giants” and actually mean it. Now it’s trivial. With education, training, and experience, professionals always stand “on shoulders of giants” (OSOG). Experts readily solve complex problems, but the truly difficult ones aren’t solved through training. Instead, a combination of muddling through and the dancer style of curiosity is deployed (more on this later). We have industries like semiconductors, solar, and gene sequencing with such high learning rates that the whole field seems to ascend OSOG levels daily.

These fast-moving industries follow Wright’s Law. Most industries don’t follow Wright’s Law because of friction against discovering and distributing efficiencies. In healthcare, regulatory barriers, high upfront research costs, and resistance to change keep learning rates low. Of course, individuals have expert-level proficiencies, many with private hacks to make life easier. Unfortunately, the broader field does not benefit from individual gains, and progress is made only when knowledge trickles down to the level of education, training, and regulation.

This makes me rather unhappy, and I wonder if even highly recalcitrant fields like healthcare could be nudged into the Wright’s Law regime.

No surprise that I view AI as central, but it’s a specific cocktail of intelligence that has my attention. Even before silicon, scaling computation has advanced intelligence. However, we will soon run into the limits of scaling compute, and the next stage of intelligence will need to be mixed (or massed, as proposed by Venkatesh Rao). Expertise + AI Agents + Knowledge Graphs will be the composite material that will enable us not just to see further, but to bus entire domains across what I think of as the Giant’s Causeway of Intelligence.

Let’s explore the properties of this composite material a little deeper, starting with expertise and its effects.

An individual’s motivation and drive are touted as being the reason behind high levels of expertise and achievement. At best, motivation is an emergent phenomenon, a layer that people add to understand their own behavior and subjective experience (ref, ref). Meanwhile, curiosity is a fundamental force. Building knowledge networks, compressing them, and then applying them in flexible ways is a core drive. Every day, all of us (not just the “motivated”) cluster similar concepts under an identity, then use that identity in highly composable ways (ref).

There are a few architectural styles of curiosity that are deployed. ‘Architecture’ is the network structure of concepts and connections uncovered during exploration. STEM fields have a “hunter” style of curiosity: tight clusters and goal-directed. While great for answers, the hunter style has difficulty making novel connections. Echoing Feyerabend’s ‘anything goes’ philosophy, novel connections require what is formally termed high forward flow: an exploration mode where there is significant distance between previous thoughts and new thoughts (ref). Experts don’t make random wild connections when at the edge of their field but control risk by picking between options likely to succeed, which has been termed ‘muddling through’.
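To make "distance between previous thoughts and new thoughts" concrete, here is a minimal sketch. It assumes each thought has already been embedded as a numeric vector and scores a sequence by averaging, for every thought after the first, its mean cosine distance to all earlier thoughts. The function names and the toy two-dimensional vectors are illustrative assumptions, not taken from the cited work.

```python
import math


def cosine_distance(a, b):
    """1 - cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


def forward_flow(thoughts):
    """Average distance of each thought from all the thoughts before it.

    `thoughts` is an ordered list of embedding vectors (assumed input).
    """
    per_thought = []
    for i in range(1, len(thoughts)):
        distances = [cosine_distance(thoughts[i], thoughts[j]) for j in range(i)]
        per_thought.append(sum(distances) / len(distances))
    return sum(per_thought) / len(per_thought)


# Toy, made-up embeddings: a "hunter" stays in a tight cluster,
# a "dancer" keeps jumping to distant regions of concept space.
hunter = [(1.0, 0.0), (1.0, 0.1), (1.0, 0.2)]
dancer = [(1.0, 0.0), (0.0, 1.0), (-1.0, 0.0)]

print(forward_flow(hunter) < forward_flow(dancer))
```

With real text, the vectors would come from whatever embedding model is at hand; only the relative scores between sequences are meaningful here.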

Stepping back, if you consider that even experts are muddling at the edges then the only difference between low and high expertise is their knowledge network. The book Accelerated Expertise, summarized here, explores methods of rapidly extracting and transmitting expertise in the context of the US military. Through the process of Cognitive Task Analysis expertise can be extracted and used in simulations to induce the same knowledge networks in the minds of trainees. From this exercise we can take away that expertise can be accelerated by giving people with base training access to new networks of knowledge.

Another way to build a great knowledge network is through process repetition, you know… experience. These experience/learning curves predict success in industries that follow Wright’s Law. Wright’s Law is the observation that every time output doubles the cost of production falls by a certain percentage. This rate of cost reduction is termed as the learning rate. As a reference point, solar energy drops in price by 20% every time the installed solar capacity doubles. While most industries benefit from things like economies of scale they can’t compete with these steady efficiency gains. Wright’s Law isn’t flipped on through some single lever but emerges through the culture right from the factory floor all the way up to strategy.
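As a rough illustration of the arithmetic, the sketch below computes unit cost after a number of doublings of cumulative output. The function name, the starting cost of 100, and the unit counts are assumptions chosen for the example; only the 20% learning rate comes from the solar reference point above.

```python
import math


def wrights_law_cost(initial_cost, cumulative_units, learning_rate, initial_units=1.0):
    """Unit cost once cumulative output reaches `cumulative_units`.

    Each doubling of cumulative output multiplies cost by (1 - learning_rate).
    """
    doublings = math.log2(cumulative_units / initial_units)
    return initial_cost * (1.0 - learning_rate) ** doublings


# Hypothetical starting cost of 100 with a 20% learning rate.
for units in (1, 2, 4, 8):
    print(units, round(wrights_law_cost(100.0, units, 0.20), 2))
```

Three doublings at a 20% learning rate take the cost from 100 to 80, 64, and then 51.2, which is why steady learning rates compound into large differences over time.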

There are shared cultural phenomena that underlie the experience curve effect:

- Labor efficiency - where workers are more confident, learn shortcuts and design improvements.

- Methods improvement, specialization, and standardization - through repeated use the tools and protocols of work improve.

- Technology-driven learning - better ways of accessing information and automated production increase rates of production.

- Better use of equipment - machinery is used at full capacity as experience grows.

- Network effects - a shared culture of work allows people to work across companies with little training.

- Shared experience effects - two or more products following a similar design philosophy means little retraining is needed.

Each of these is essentially a creation, compression, and application of knowledge networks. In fields like healthcare, efficiency gains are difficult because skill and knowledge diffusion is slow.

Maybe, there could be an app for that…

Knowledge graphs (KGs) are databases, but instead of a table they create a network graph, capturing relationships between entities where both the entities and the relationships have metadata. Much like the mental knowledge networks built during curious exploration, knowledge graphs don’t just capture information like Keanu → Matrix but more like Keanu -star of→ Matrix. And all three, Keanu, star of, and Matrix, have associated properties. In a way KGs are crystallized expertise and have congruent advantages. They don’t hallucinate and are easy to audit, fix, and update. Data in KGs can be linked to real world evidence, enabling them to serve as a source of truth and even causality, a critical feature for medicine (ref).
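A minimal sketch of that idea in plain Python, where both entities and relationships carry their own properties. The class and method names are hypothetical and the property values are only illustrative; a real system would use a graph database or an RDF store.

```python
from dataclasses import dataclass, field


@dataclass
class Entity:
    name: str
    properties: dict = field(default_factory=dict)


@dataclass
class Relation:
    source: str
    label: str
    target: str
    properties: dict = field(default_factory=dict)


class KnowledgeGraph:
    """Tiny in-memory property graph (illustrative only)."""

    def __init__(self):
        self.entities = {}
        self.relations = []

    def add_entity(self, name, **properties):
        self.entities[name] = Entity(name, properties)

    def add_relation(self, source, label, target, **properties):
        # Require both endpoints to exist so every edge can be audited.
        if source not in self.entities or target not in self.entities:
            raise KeyError("add both entities before relating them")
        self.relations.append(Relation(source, label, target, properties))

    def outgoing(self, source, label=None):
        """Relations leaving `source`, optionally filtered by label."""
        return [
            r for r in self.relations
            if r.source == source and (label is None or r.label == label)
        ]


kg = KnowledgeGraph()
kg.add_entity("Keanu", kind="person")
kg.add_entity("Matrix", kind="film")
kg.add_relation("Keanu", "star of", "Matrix", role="Neo")

for rel in kg.outgoing("Keanu", "star of"):
    print(rel.source, f"-{rel.label}->", rel.target, rel.properties)
```

Because every fact is an explicit record, fixing or updating knowledge means editing one entity or one relation rather than retraining anything.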

Medicine pulls from a wide array of domains to manage diseases. It’s impossible for all the information to be present in one mind, but knowledge graphs can visualise relationships across domains and help uncover novel solutions. Recently projects like PrimeKG have combined several knowledge graphs to integrate multimodal clinical knowledge. KGs have already shown great promise in fields like drug discovery and leading hospitals, like Mayo Clinic, think that they are the path to the future. The one drawback is poor interactivity.

LLMs, meanwhile, are easy to interact with and have wonderful expressivity. Due to their generative structure, LLMs have zero explainability and completely lack credibility. LLMs are powerful, but their shortcomings make them risky in applications like disease diagnosis. The right research paper and textbooks trump generativity. Further, the way that AI is built today can’t fix these problems. Methods like fine-tuning and retraining exist, but they require massive compute, which is difficult to access, and quality isn’t guaranteed. The current way of building AI, throwing mountains of data into hot cauldrons of compute and stirring with the network of choice (mandatory xkcd), ignores the very accessible stores of expertise like KGs.

LLMs (and really LxMs) are the perfect complement to KGs. LLMs can access and operate KGs in agentic ways, making it easy to understand network relationships through natural language. As a major benefit, retrieving an accurate answer from KGs is 50x cheaper than generating one. KGs make AI explainable “by structuring information, extracting features and relations, and performing reasoning” (ref). With easy update and audit abilities, KGs can easily disseminate know-how. When combined with a formal process like expertise extraction, KGs could serve as a powerful store of knowledge for institutions and even whole domains. We will no longer have to wait a generation to apply breakthroughs.

Experts+LxMs+KGs are the composite material to accelerate innovation and lower the costs of building the next generation of intelligence. We have seen how experts are always trying to have a more complete knowledge network with high compression and flexibility, allowing better composability. The combination of knowledge graphs and LLMs provides the medium to stimulate dancer-like exploration of options. This framework will allow high-proficiency but not-yet-experts to cross the barrier of experience with ease. Instead of climbing up a giant, one simply walks The Giant’s Causeway. Using a combination of modern tools and updated practices for expertise extraction, we can accelerate proficiency even in domains which are resistant to Wright’s Law, unlocking rapid progress.

****

Appendix

Diving a little deeper into my area of expertise, healthcare, a few ways where agents and KGs can help:

Reimagining AI in Healthcare: Beyond Basic RAG with FHIR, Knowledge Graphs, and AI Agents

May 3, 2024

Introduction

While exploring the application of AI agents in healthcare we see that standard Retrieval-Augmented Generation (RAG) and fine-tuning methods often fall short in the interconnected realms of healthcare and research. These traditional methods struggle to leverage the structured knowledge available, such as knowledge graphs. Data approaches like Fast Healthcare Interoperability Resources (FHIR) used alongside advanced knowledge graphs can significantly enhance AI agents, providing more effective and context-aware solutions.

The Shortcomings of Standard RAG in Healthcare

Traditional RAG models, designed to pull information from external databases or texts, often disappoint in healthcare—a domain marked by complex, interlinked data. These models typically fail to utilize the nuanced relationships and detailed data essential for accurate medical insights (GitHub) (ar5iv).

Leveraging FHIR and Knowledge Graphs

FHIR offers a robust framework for electronic health records (EHR), enhancing data accessibility and interoperability. Integrated with knowledge graphs, FHIR transforms healthcare data into a format ideal for AI applications, enriching the AI’s ability to predict complex medical conditions through a dynamic use of real-time and historical data (ar5iv) (Mayo Clinic Platform).

Enhancing AI with Advanced RAG Techniques

Advanced RAG techniques utilize detailed knowledge graphs covering diseases, treatments, and patient histories. These graphs underpin AI models, enabling more accurate and relevant information retrieval and generation. This capability allows healthcare providers to offer personalized care based on a comprehensive understanding of patient health (Ethical AI Authority) (Microsoft Cloud).

Implementing AI Agents in Healthcare

AI agents enhanced with RAG and knowledge graphs can revolutionize diagnosis accuracy, patient outcome predictions, and treatment optimizations. These agents offer actionable insights derived from a deep understanding of individual and aggregated medical data (SpringerOpen).

A Novel Approach: RAG + FHIR Knowledge Graphs

Integrating RAG with FHIR knowledge graphs can significantly enhance AI capabilities in healthcare. This method maps FHIR resources to a knowledge graph, augmenting the RAG model’s access to structured medical data, thus enriching AI responses with verified medical knowledge and patient-specific information. View the complete notebook in my AI Studio.
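The general shape of the idea can be sketched as follows. This is not the notebook's code. It assumes FHIR R4-style JSON resources already loaded as Python dictionaries, and the predicate names (`has_condition`, `prescribed`), helper functions, and sample patient are all hypothetical. The final prompt would be handed to whichever language model is in use.

```python
def fhir_to_triples(resources):
    """Map a few FHIR resource types to (subject, predicate, object) triples."""
    triples = []
    for res in resources:
        rtype = res.get("resourceType")
        if rtype == "Condition":
            triples.append(
                (res["subject"]["reference"], "has_condition", res["code"]["text"])
            )
        elif rtype == "MedicationRequest":
            triples.append(
                (
                    res["subject"]["reference"],
                    "prescribed",
                    res["medicationCodeableConcept"]["text"],
                )
            )
    return triples


def retrieve_context(triples, patient_ref):
    """Collect every graph fact about one patient as plain sentences."""
    return "\n".join(f"{s} {p} {o}" for s, p, o in triples if s == patient_ref)


def build_prompt(question, context):
    """Ground the question in retrieved facts before it reaches the model."""
    return f"Answer using only these facts:\n{context}\n\nQuestion: {question}"


# Synthetic example data, not real patient records.
resources = [
    {"resourceType": "Patient", "id": "example-1"},
    {
        "resourceType": "Condition",
        "subject": {"reference": "Patient/example-1"},
        "code": {"text": "Type 2 diabetes mellitus"},
    },
    {
        "resourceType": "MedicationRequest",
        "subject": {"reference": "Patient/example-1"},
        "medicationCodeableConcept": {"text": "Metformin"},
    },
]

triples = fhir_to_triples(resources)
prompt = build_prompt(
    "What is this patient being treated for?",
    retrieve_context(triples, "Patient/example-1"),
)
print(prompt)
```

Keeping retrieval as a graph lookup means every statement in the prompt can be traced back to a specific FHIR resource, which is where the explainability comes from.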

Challenges and Future Directions

While promising, integrating FHIR, knowledge graphs, and advanced RAG with AI agents in healthcare faces challenges such as data privacy, computational demands, and knowledge graph maintenance. These issues must be addressed to ensure ethical implementation and stakeholder consideration (MDPI).

Conclusion

Integrating FHIR, knowledge graphs, and advanced RAG techniques into AI agents represents a significant advancement in healthcare AI applications. These technologies enable a precision and understanding previously unattainable, promising to dramatically improve care delivery and management as they evolve.

If you’re in the field or exploring applying AI, do get in touch!

On Being Good vs. Knowing Good: Perspectives on AI

January 22, 2024

In this video, Stephen Fry narrates Nick Cave’s letter, which argues that using ChatGPT as a shortcut to creativity is detrimental. Surprisingly, I found myself in agreement. Having been involved in the AI industry for over a decade, I’ve always viewed AI positively. As an entrepreneur who pitches AI to investors and customers, I liken AI to technologies like spreadsheets: they eliminate tedious tasks, but you still need to understand what you’re doing.

I use various contemporary AI tools daily for tasks ranging from creating ISO template documents to drafting reference letters. However, when I’ve tried using AI as a thinking partner or advisor, it has fallen short, primarily due to its inability to discern or have taste.

Expertise involves having taste – the ability to distinguish good from bad, one decision from another. We depend on experts and advisors not just for their knowledge, but for their ability to guide us optimally, sometimes even questioning our intended goals. They speak confidently amid uncertainty, drawing on their experience.

ChatGPT/LLMs and their generative counterparts exhibit confidence but, by design, lack real-world experience.

My agreement with the video’s sentiment stems not from an inherent issue with computational tools, but from the understanding that taste develops through experience, however imperfect. Just as we learn arithmetic before using calculators, and calculators before spreadsheets, we need to cultivate a new culture around these emerging tools.

In mission-critical fields like healthcare, balancing exploration and regulation is crucial. Over-regulation can hinder society from benefiting from innovative uses and discoveries, while a lack of regulation places undue risk on vulnerable populations, as seen in historical clinical trials.

I envision a path where doctors integrate AI into their workflows with enthusiasm yet maintain high standards. Unlike drug development and other biotech fields, AI and its software can be corrected relatively easily. Creating a selective environment will drive quality.

Users who recognize what is good will elevate the collective ability to achieve excellence.